I am writing this post to explain, in an easy-to-understand way, the core concepts of computer architecture, which I consider the worldview* of this IT era.

- *I use the word “worldview” to mean the basic beliefs, values, ways of thinking, and guiding principles that shape one’s approach to conceptualizing and creating something, whether it’s a product, business, work of art, or other endeavor. It captures the core ideology and perspective that inform the creative process and the resulting work.

Why computer architecture?

In fact, the concepts of computer architecture can be applied to society and, more importantly, to management in practice. Operations management, a field MIT Sloan School of Management is well known for, is actually very close to computer architecture. That is why, when I found out that Vivek, a professor who teaches operations management at Sloan, used to design flash memory controllers, my reaction was “of course”.

For context, I was once in charge of a system that had to process huge amounts of multimedia data in real time. If I explain things well enough, by the end of this post you should have a rough sense of what that involves.

Computer architecture is the worldview behind all the devices and software deeply embedded in our lives. It shapes almost every aspect of daily life, from the obvious smartphones, home appliances, and cars to the websites we visit every day.

Therefore, by understanding the basics of this worldview and how it operates, you can interpret the inconveniences you encounter much more easily than you might expect, and with practice, you may even be able to predict their causes.

In today’s world, where almost everything is built on computer architecture concepts, people who understand this worldview can recognize major shifts and the opportunities that open up when a long-standing bottleneck is removed. Along the way, I will briefly cover some historical turning points related to it.

You may know how to program (Python, R, etc.), but...

Before delving deeper, let me clarify the difference between high-level programming languages and computer architecture concepts, which many people find confusing.

Since the late 2000s, high-level programming languages have been gaining popularity. Here, “high-level” refers not to difficulty but to the level of abstraction. At the cost of some performance, these languages focus on hiding the complexity of low-level control so that programs can be implemented faster and more easily.

- A high-level language is a programming language that is designed to be easy for humans to read and write. These languages are typically more abstract and closer to human language than low-level languages, making them easier to understand and use for programming tasks. Examples of high-level languages include Python, Java, and C++.

This is partly because the performance compromises made in these languages were offset by the steady improvements in chip performance that came with Moore’s Law, and partly because the market demanded a wide and rapidly changing variety of applications, which placed greater value on time to market. The growth of communication infrastructure such as 3G and LTE also played a significant role in driving this demand.

Python, which many people use these days, is a good example of a high-level language. To illustrate, let’s compare it with C, which is generally considered a lower-level language (though to me, C is already quite high-level). Whether you use Python or C for data processing with arithmetic operations, the difference lies mostly in the syntax. The most challenging aspect of C is memory control. In Python, you don’t have to worry about the bit width of the data you are handling, but in C, if an arithmetic result exceeds the bit width you specified in advance, it overflows: unsigned values silently wrap around, and signed overflow is undefined behavior, so you end up with wrong results.

For example, adding two 10-bit numbers can require an 11-bit result, and multiplying two 10-bit numbers can require a 20-bit result. (This can be optimized further based on the data’s actual min/max range.) In other words, you need to manage the memory size used at each step of the computation. As a result, memory usage in the past varied significantly with the programmer’s skill: engineers who were not confident in controlling complex operation steps simply allocated a lot of memory. However, memory usage directly affects overall computer performance and cost, and back then using memory on the order of megabytes was unimaginable, so everything had to be managed carefully. Today, hardly anyone worries about memory allocation when using Python.
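The bit-width arithmetic above can be checked with a short Python sketch. `bits_needed` and `wrap_to_width` are hypothetical helper names; `wrap_to_width` only emulates what happens when a result is stored in an unsigned fixed-width integer (as with C’s unsigned types), where the high bits are silently discarded.

```python
def bits_needed(value: int) -> int:
    """Number of bits needed to store a non-negative integer (at least 1)."""
    return max(value.bit_length(), 1)


def wrap_to_width(value: int, width: int) -> int:
    """Emulate an unsigned integer of `width` bits: keep only the low bits."""
    return value & ((1 << width) - 1)


max_10bit = (1 << 10) - 1        # 1023, the largest unsigned 10-bit value

total = max_10bit + max_10bit    # 2046
product = max_10bit * max_10bit  # 1046529

print(bits_needed(total))    # 11
print(bits_needed(product))  # 20

# Python ints grow as needed, so nothing is lost here...
print(product)               # 1046529
# ...but squeezing the product into a 16-bit container drops the high bits.
print(wrap_to_width(product, 16))  # 63489
```

This is the bookkeeping a C programmer has to do by hand at every step, and exactly what Python hides.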

So, what is the difference between high-level programming languages and computer architecture concepts? Every programming language is ultimately a way to operate a computer, so every language necessarily embodies the computer architecture worldview. However, the higher-level the language, the harder it becomes to grasp that architecture intuitively.

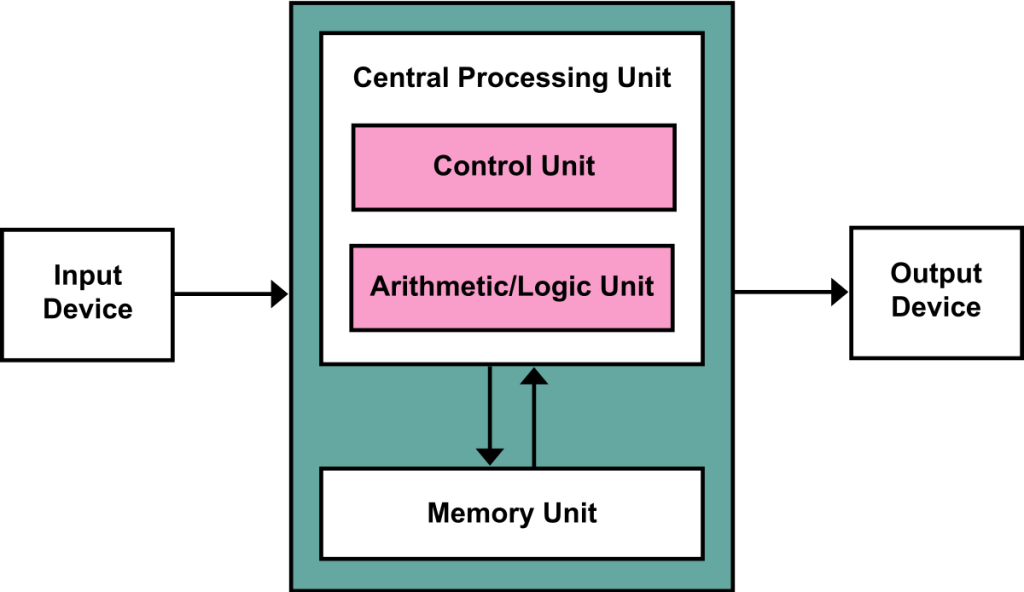

This is an image of the von Neumann architecture from Wikipedia, the fundamental architecture underlying most of today’s computer systems. It consists of Input/Output, a Memory Unit, and a CPU (Control Unit and Arithmetic/Logic Unit). This framework can be applied to any task, and even to human beings and their groups, societies, and organizations.
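The structure in the diagram can be illustrated with a toy machine in Python. The instruction set (IN, OUT, LOAD, STORE, ADD, SUB, MUL, HALT) and the single accumulator register are hypothetical simplifications, not any real CPU. The point is that instructions and data live in the same memory, the control unit runs a fetch-decode-execute loop, and the ALU only does arithmetic.

```python
def alu(op: str, a: int, b: int) -> int:
    """Arithmetic/Logic Unit: pure computation, no knowledge of memory or I/O."""
    if op == "ADD":
        return a + b
    if op == "SUB":
        return a - b
    if op == "MUL":
        return a * b
    raise ValueError(f"ALU does not support {op!r}")


def run(memory: list, inputs: list) -> list:
    """Control Unit: fetch, decode, and execute instructions stored in memory."""
    pending = list(inputs)   # Input device: a queue of values to read
    outputs = []             # Output device: collected results
    acc = 0                  # accumulator register
    pc = 0                   # program counter: address of the next instruction
    while True:
        op, addr = memory[pc]  # fetch the instruction at address pc
        pc += 1
        if op == "IN":
            acc = pending.pop(0)
        elif op == "OUT":
            outputs.append(acc)
        elif op == "LOAD":
            acc = memory[addr]
        elif op == "STORE":
            memory[addr] = acc
        elif op in ("ADD", "SUB", "MUL"):
            acc = alu(op, acc, memory[addr])
        elif op == "HALT":
            return outputs
        else:
            raise ValueError(f"Unknown instruction {op!r}")


# Instructions occupy addresses 0-5; address 10 is used as a data slot.
program = [
    ("IN", None),    # read the first input into the accumulator
    ("STORE", 10),   # save it at address 10
    ("IN", None),    # read the second input
    ("MUL", 10),     # multiply by the value stored at address 10
    ("OUT", None),   # send the result to the output device
    ("HALT", None),
]
memory = program + [0] * 8   # one shared memory for instructions and data
print(run(memory, [6, 7]))   # [42]
```

Each box in the diagram maps to one piece: `pending` and `outputs` are the I/O, `memory` is the Memory Unit, `run` is the Control Unit, and `alu` is the Arithmetic/Logic Unit.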

I can imagine a fractal structure of this architecture within an organization!

Python is used in many fields. Thanks to its powerful and rich libraries, it is used in research to compute complex equations, and it can also be used to develop simple apps. As mentioned earlier, its purpose is to reduce complexity and make developing applications and functions easy and fast, so in computer architecture terms, only the Arithmetic/Logic Unit is clearly visible to the user; the other parts stay hidden.

Therefore, those who have only used high-level languages may have learned a programming language without understanding how computers actually work, and programming may feel to them like mere translation into another language with symbols such as =, {}, and ().

In other words, if you understand high-level programming languages, you can say you are familiar with the ALU (Arithmetic/Logic Unit). Don’t know languages like Python or R? Don’t worry. If you can use Excel ‘functions’, you are already familiar with the ALU of computer architecture.
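To make the hidden parts a little more concrete, here is a small Python sketch (CPython assumed; `footprint` is a hypothetical helper). The arithmetic is written the same way for small and huge integers, yet the interpreter quietly allocates more memory as the number grows. Exact byte counts vary by Python implementation and version, so they are not listed here.

```python
import sys


def footprint(value: int) -> tuple[int, int]:
    """Return (bits needed to hold the value, bytes CPython allocates for the object)."""
    return value.bit_length(), sys.getsizeof(value)


for value in (1, 2**64, 2**200):   # 2**200 is far beyond any 64-bit register
    doubled = value * 2            # the "ALU" part looks identical at any size
    bits, size = footprint(doubled)
    print(f"{bits:>3} bits -> {size} bytes")
```

The user only writes `value * 2`; deciding how much memory to reserve for the result happens out of sight.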

This post aims to aid conceptual understanding by connecting these ideas to the bigger picture, so detailed explanations will be omitted.

Now, beyond the ALU, let’s go through each of the less familiar parts one by one.

Innovation in Input/Output

If we first look at Input/Output devices from an operational perspective, we can divide them into the device’s own operation (its function) and the interface that enables that operation. In simple terms, the interface is like speaking Korean (interface) when you ask someone who only speaks Korean to perform a task (function).

If you remember the era before smartphones, you will recall how many miscellaneous devices and interfaces there were. For example, to connect a device to a PC, you had to install a device driver every time. Automatic detection improved this later, but in the old days you had to insert the CD that came with the product and install the driver from it! (Not that I am that old 😅) I remember struggling a lot to connect an audio interface I had purchased to my computer when I tried to work on MIDI.

So far, we have looked at this from the user’s perspective; now let’s think from the perspective of developing a software product. Developers have to account for every corner case across all these devices and interfaces, so the minimum development time and resources required for a software product were quite large. This structure is also why Microsoft, which owns the Windows OS, was able to maintain a monopolistic advantage: the OS plays the platform role of connecting and controlling all the device drivers.
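The platform role of the OS can be sketched in a few lines of Python. Everything here is hypothetical and greatly simplified (`DisplayDriver`, `ToyOS`, and the driver classes are illustrative names, not a real OS API): the abstract class plays the interface, each driver supplies the device-specific function, and applications only ever talk to the OS.

```python
from abc import ABC, abstractmethod


class DisplayDriver(ABC):
    """The interface: what the OS expects every display driver to provide."""

    @abstractmethod
    def show(self, text: str) -> str: ...


class VgaMonitorDriver(DisplayDriver):
    """Device-specific function for a (hypothetical) VGA monitor."""

    def show(self, text: str) -> str:
        return f"[VGA] {text}"


class HdmiMonitorDriver(DisplayDriver):
    """Device-specific function for a (hypothetical) HDMI monitor."""

    def show(self, text: str) -> str:
        return f"[HDMI] {text}"


class ToyOS:
    """Plays the platform role: applications talk to the OS, never to devices."""

    def __init__(self) -> None:
        self._drivers: dict[str, DisplayDriver] = {}

    def install_driver(self, device_name: str, driver: DisplayDriver) -> None:
        self._drivers[device_name] = driver

    def display(self, device_name: str, text: str) -> str:
        driver = self._drivers.get(device_name)
        if driver is None:
            raise RuntimeError(f"No driver installed for {device_name!r}")
        return driver.show(text)


toy_os = ToyOS()
toy_os.install_driver("monitor", HdmiMonitorDriver())
print(toy_os.display("monitor", "Hello"))  # [HDMI] Hello
```

Because applications depend only on the `DisplayDriver` interface, switching to a different monitor means installing a different driver rather than rewriting the application. The flip side is that someone has to write and maintain a driver for every device, which is the burden described above.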

The interface change that is easiest to understand is probably the display interface (VGA, DVI, HDMI, DisplayPort, etc.), which anyone who has used a TV or monitor has likely run into at least once.

The display’s function has also changed in terms of image resolution (VGA, HD, Full HD, 4K, UHD, etc.), fps (frames per second), picture quality enhancement, and panel materials.
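One rough way to see why better display functions kept forcing new interfaces is to estimate the raw pixel data rate a cable must carry. This Python sketch assumes 24 bits per pixel (8 bits per RGB channel) and ignores blanking intervals and link-encoding overhead, so actual interface requirements are higher; `raw_bitrate_gbps` is a hypothetical helper.

```python
def raw_bitrate_gbps(width: int, height: int, fps: int, bits_per_pixel: int = 24) -> float:
    """Uncompressed pixel data rate in Gbit/s (ignores blanking and encoding overhead)."""
    return width * height * fps * bits_per_pixel / 1e9


modes = {
    "VGA (640x480)": (640, 480),
    "Full HD (1920x1080)": (1920, 1080),
    "4K UHD (3840x2160)": (3840, 2160),
}

for name, (w, h) in modes.items():
    print(f"{name} @ 60 fps: {raw_bitrate_gbps(w, h, 60):.2f} Gbit/s")
# VGA (640x480) @ 60 fps: 0.44 Gbit/s
# Full HD (1920x1080) @ 60 fps: 2.99 Gbit/s
# 4K UHD (3840x2160) @ 60 fps: 11.94 Gbit/s
```

Each step up in resolution multiplies the data rate (4K carries four times the pixels of Full HD), which helps explain why older interfaces were eventually replaced.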

In fact, new materials and high-performance functions achieved through innovation are valuable in themselves, and we are grateful for that progress. From the customer’s perspective, however, everyone has experienced the inconvenience of interface changes along the way. For example, when a newly purchased computer no longer supported the outdated VGA interface, we had to buy an HDMI-to-VGA converter to use it.

Now, let’s take a look at our daily lives centered around smartphones, which we enjoy so naturally, from the perspective of input/output device innovation.

There are still various interfaces used to exchange data within the system, but the focus here is solely the user experience. Physical input is limited to touch and voice, physical output is limited to sound and display, and all other interfaces are wireless. (Apple’s proprietary wired connector is out of scope here.)

Customers have become far more comfortable, and from the perspective of developing software products, development complexity has dropped significantly. This made it possible for small teams to build software products that were previously achievable only by large companies, leading to numerous startup success stories.

Recently, big tech companies have been trying to seize new opportunities by expanding the Input/Output dimension through VR/AR/XR. Personally, however, I feel that the macro environment surrounding this I/O expansion is not quite ripe yet. In terms of delivering value, I don’t see a market where a significant opportunity existed but was held back because I/O was the bottleneck. Still, if this extended canvas pulls in new demand and triggers innovation in the other areas that are currently the bottlenecks, it could be meaningful as a catalyst.

In the following post, I will cover topics related to memory units, control units, and innovations in AI.
