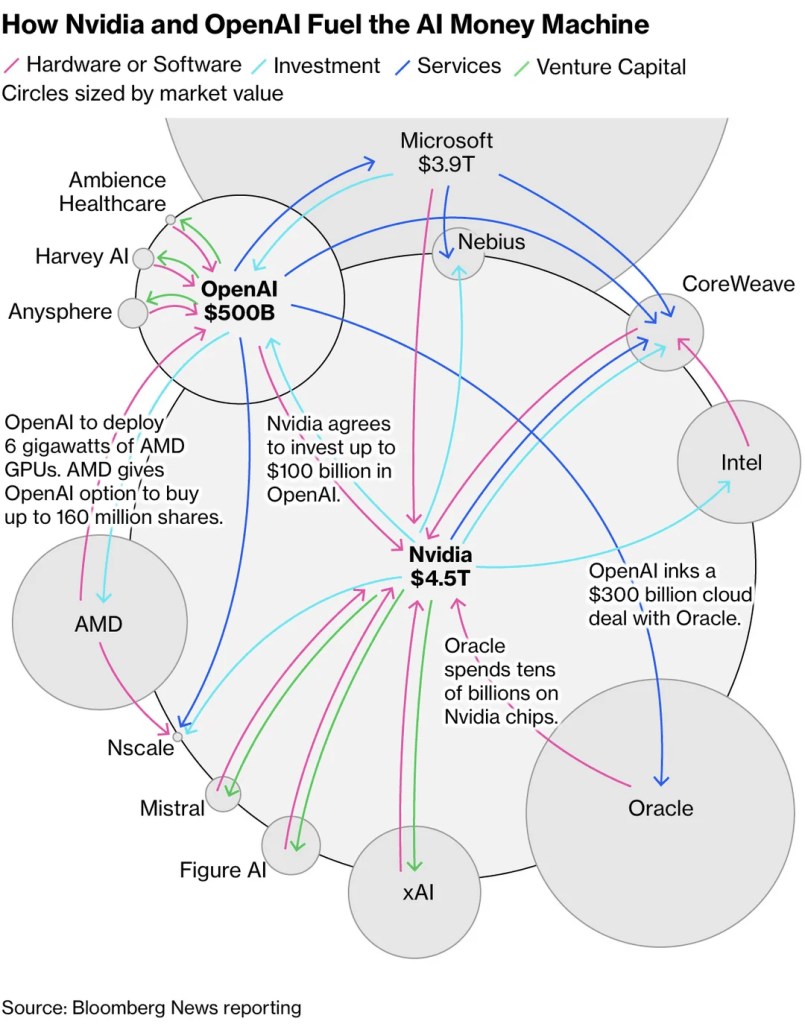

Recently, OpenAI has dominated headlines with its intricate web of circular investments. The structure is so complex and unprecedented that it has left many observers puzzled and even uneasy. The high level of interdependence between the players makes it look as though a single misstep could bring the entire system down. Meanwhile, a16z drew attention by announcing that Sam Altman would finally share his thoughts on AGI on their podcast.

I won’t cover the flood of news detailing who OpenAI struck deals with, how those deals are structured, or which tools and platforms it has recently unveiled; that information is already everywhere. Instead, let’s step back and look at the bigger structural story.

Naturally, different people interpret these moves through their own lenses, shaped by their backgrounds and expertise. Some have asked for my take as well, but it’s not something that can be summed up in a few sentences. So in this issue, I’d like to lay out some of my thoughts.

Rather than viewing this complex structure through the short-term flow of money, let’s look at where these players are all pointing. The circular investment pattern isn’t just about capital exchange. It reflects a shared conviction that we’re heading into the application era of AI.

They all recognize what’s coming: within the next two to three years, we’ll see an explosion of AI-generated data, content, and applications, and that’s when the real competition will begin. What we’re witnessing now is less about today’s profits and more about securing a time advantage: building the compute infrastructure ahead of that wave. The smartest players are financing the bottleneck today to own the market tomorrow.

Compute as the new bottleneck

Every generational shift in IT begins with a breakthrough, then consolidates into distribution and application. The PC era pivoted on spreadsheets; the internet on browsers; mobile on the App Store. Each time, the eventual value came not from the core technology, but from what ordinary users could do with it.

OpenAI’s recent behavior echoes that inflection. It’s no longer about pushing the frontier of intelligence; it’s about embedding that intelligence. That’s the same realization Apple had around 2008: the product was no longer the device, it was the ecosystem.

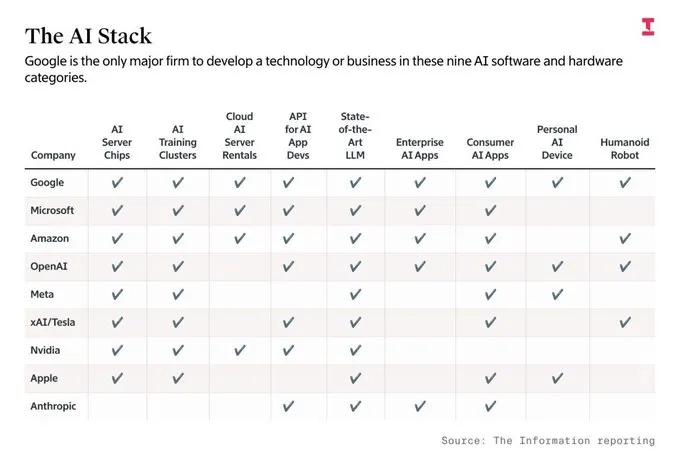

What’s different now is that the new “distribution” isn’t bandwidth or devices, but compute itself. NVIDIA, AMD, Oracle, and OpenAI are financing each other because they all recognize the same physical truth: without more compute, power, and data centers, the application boom can’t happen.

We’ve seen this before. Vendor financing was how the mobile boom escaped the 2008 recession. In 2009, Apple prepaid $500M to LG Display (LCD) and $500M to Toshiba (NAND), with $1.2B outstanding. That helped pull the ecosystem out of the 2008–09 slump and set the pace for smartphones. Carriers and export‑credit agencies also bankrolled network equipment for 3G/4G rollouts.

The logic is identical: solve your bottleneck early.

And here’s what makes this cycle even more interesting: hardware companies are the ones sitting on the cash this time. Their customers already front-loaded massive investments before their monetization cycles began. In other words, compute sellers got paid early; compute buyers haven’t yet entered the profit phase.

AI models are generating data and content at an insane rate. The volume of synthetic and real-time multimodal data keeps growing, which means the infrastructure appetite keeps compounding. For the AI ecosystem to move into the full-fledged application era, it needs a much bigger base of compute, and fast.

That’s where the mismatch lies. Everyone knows that when the app wave hits, demand for high-performance compute will explode. But unlike software, silicon has physics-imposed lead times: 24–36 months to develop, tape out, and scale production. The leaders are betting precisely on that horizon. Those who held leadership roles during the mobile S-curve (2007–2014) recognize the pattern. They’re not just financing capacity; they’re timing it. They all believe the AI application explosion will arrive within that same two- to three-year window.

Politics & Geopolitics: New “Too Big To Fail”

The U.S. now treats AI as a national-security asset. Export controls on advanced chips to China and continuing White House and congressional actions point in one direction: this policy line will persist regardless of who’s in power. Allies are being asked to align as well.

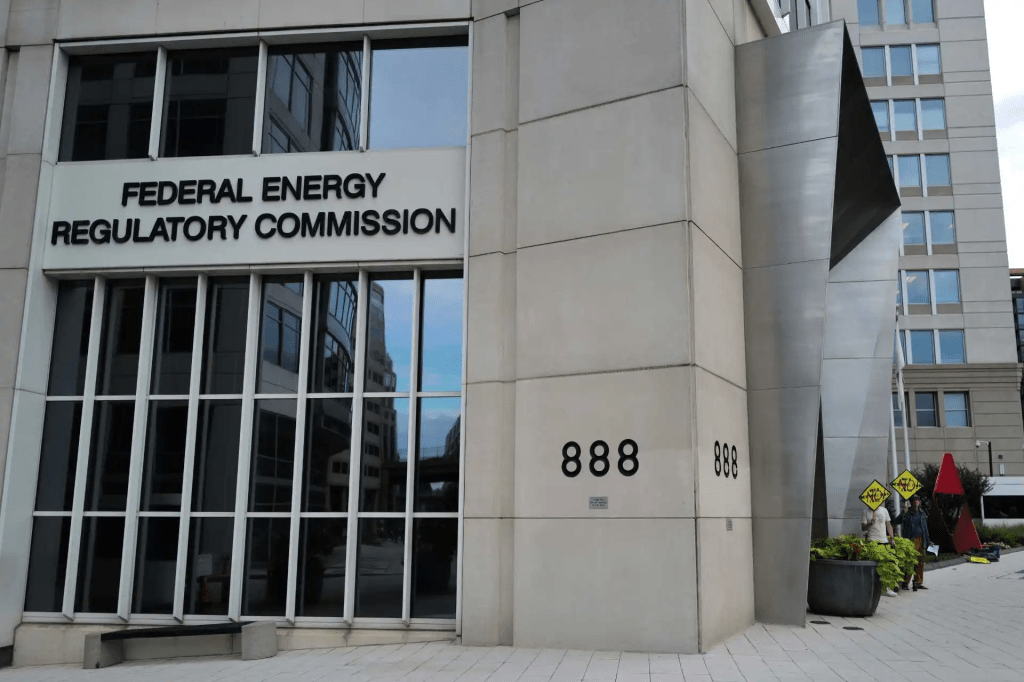

At present, grid interconnection reviews for large power users — such as AI data centers and semiconductor fabs — typically take many months to several years, with large-load projects often facing even longer delays. Just a few days ago, the U.S. Energy Secretary formally asked FERC to introduce a conditional fast-track process to accelerate these reviews — proposing that qualifying, high-priority projects such as AI data centers could complete their interconnection studies within 60 days. The goal is clear: to compress the physics- and permitting-driven lag so that the coming wave of AI applications has power when it arrives.

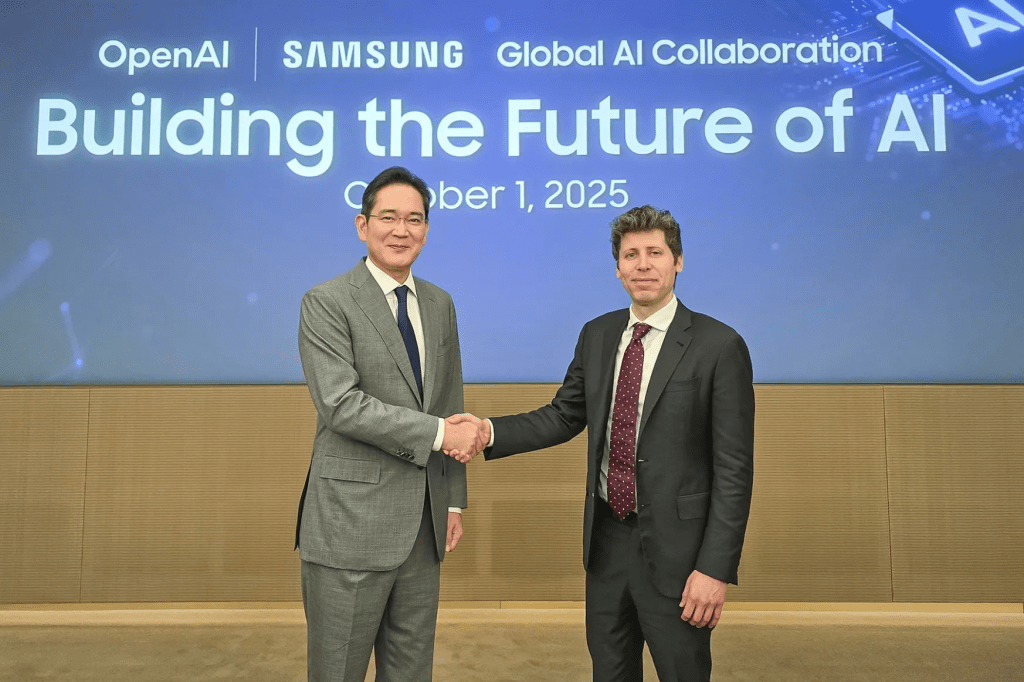

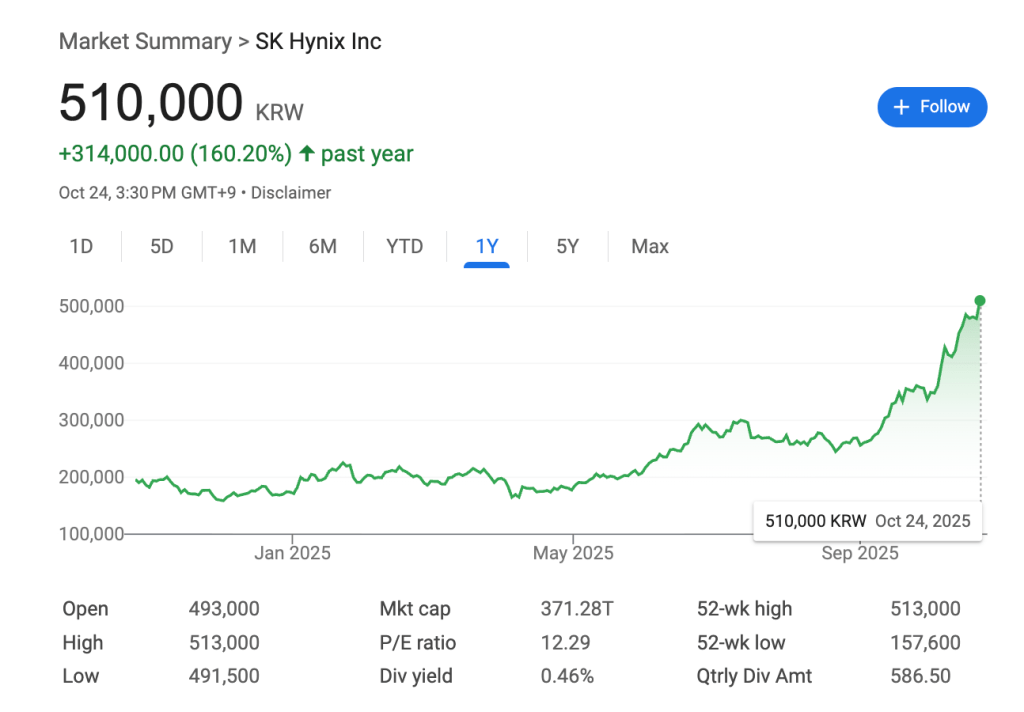

Allied nations are now part of this new too-big-to-fail architecture. South Korea is one of them. On Oct 1, 2025, OpenAI’s Sam Altman met President Lee Jae‑myung in Seoul and announced preliminary agreements with Samsung Electronics and SK hynix tied to OpenAI’s Stargate build‑out — including LOIs for large memory supply and local data‑center cooperation.

Subsequent coverage cited targets of up to 900K DRAM wafers/month to support AI infrastructure. To meet this surging demand, Korean chipmakers are expected to double existing plant capacity. Accordingly, the government is preparing legal revisions to allow major conglomerates to channel capital through affiliated funds, while additional financing from national strategic funds is also being arranged to support the expansion.

If you’re in the AI or investment space and haven’t been paying attention to Korea, now’s the time to start. This isn’t another story about K-pop idols or K-beauty exports — it’s about K-tech rising as a core pillar of this huge AI picture. Beyond geopolitics, Korea’s high disposable income and tech-savvy population make it one of the most attractive markets for AI adoption. Sam Altman isn’t the only one paying attention – many global leaders are.

BlackRock recently pledged to help make Korea the AI capital of Asia‑Pacific, signaling strong investment intent. Palantir’s Alex Karp, during his recent visit, even shared personal ties — his first girlfriend was Korean, his mother frequently visits the country, and she’s learning Korean. 🤣 During his visit, Palantir’s pop-up store sold out completely. Anthropic has also moved to establish a local presence, drawn by Korea’s unusually high per‑capita rate of paid AI‑tool users. And on the hardware side, Elon Musk has re‑entered the scene as a key partner for Samsung Foundry. In a recent investor briefing, he hinted at a complete overhaul of Tesla’s chip architecture and an expanded partnership with Samsung — pointing clearly toward a custom‑architecture future.

OpenAI is shifting from “model era” to “app era”

So, back to OpenAI…

The recent a16z interview made something clear: Altman seems to believe that, in terms of model development, OpenAI has reached a steady trajectory — a level of capability that meets the goals they originally aimed for. Having reached that point, he’s now stepping away from the philosophical tug-of-war around AGI itself.

Instead, his focus is shifting toward what comes next: building products that people are willing to pay for, and creating the infrastructure to sustain them.

The most interesting thing for me from the interview was his praise for Meta’s ad algorithm — acknowledging that while ads are often annoying, Meta’s system understands him so well that it feels almost helpful.

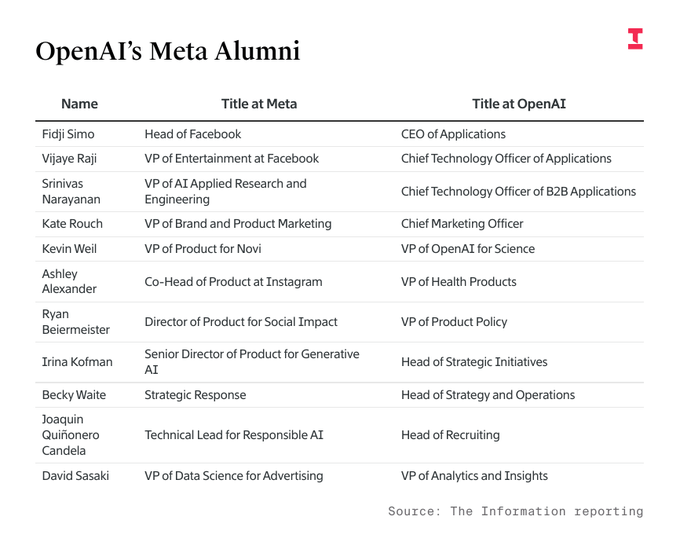

He also mentioned that Meta’s real strength lies in capturing people’s time and attention. Time spent is value created. That comment, combined with his recent frustration when an OpenAI engineer defected to Meta (a moment when he dismissed AI hype with clear irritation), hints at something deeper: Sam sees Meta as his main rival now.

Both companies are competing for the same thing — not only intelligence itself, but the surface where intelligence meets users. I came across this list which supports this movement.

In other words, OpenAI wants to be the Apple and the Meta of the AI era — owning both the platform and the engagement loop.

AI Models

This piece is already getting long, but since I mentioned AI models in the last episode, let’s cover it briefly. 😅

The field has shifted from chasing benchmark scores to exploring how smaller models can achieve stronger performance. New research, such as work on Agentic Context Engineering and Abstract Reasoning with Memory, shows that much of this progress depends on increasing memory access. It makes sense: the more frequently a model can recall prior information, the “smarter” it seems. But implementing that in hardware is another story.

Mathematically shrinking a model doesn’t guarantee it’s more efficient in practice. It can actually run slower or consume far more power, making high-throughput operation physically impractical at the system level. Deciding those trade-offs among compute, memory, throughput, cost, and power consumption is where architectural work shines.
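To make the point about smaller models concrete, here is a minimal roofline-style sketch. It assumes a step can finish no faster than the slower of two limits: doing the arithmetic and moving the data. The accelerator specs and both workloads are made-up numbers chosen for illustration, not measurements of any real chip or model.

```python
def step_time_ms(flops, bytes_moved, peak_tflops, mem_bw_tb_per_s):
    """Roofline-style lower bound on the time for one inference step.

    The step is limited by whichever takes longer: the arithmetic
    (flops / peak compute) or the data movement (bytes / bandwidth).
    """
    compute_s = flops / (peak_tflops * 1e12)
    memory_s = bytes_moved / (mem_bw_tb_per_s * 1e12)
    return max(compute_s, memory_s) * 1e3


# Hypothetical accelerator (illustrative values, not a real product).
PEAK_TFLOPS = 100.0      # peak compute, in TFLOP/s
MEM_BW_TB_PER_S = 2.0    # memory bandwidth, in TB/s

# Hypothetical workloads per step (illustrative values only).
# "Large" model: lots of arithmetic, little memory traffic.
large_ms = step_time_ms(2e12, 1e10, PEAK_TFLOPS, MEM_BW_TB_PER_S)
# "Small" model: 4x less arithmetic, but 6x more memory traffic
# because it keeps re-reading stored context.
small_ms = step_time_ms(5e11, 6e10, PEAK_TFLOPS, MEM_BW_TB_PER_S)

print(f"large model: {large_ms:.1f} ms per step")
print(f"small model: {small_ms:.1f} ms per step")
```

Under these assumed numbers the smaller model is memory-bound and ends up slower per step than the larger one, even though it does a quarter of the arithmetic. Real systems add caching, batching, and power limits on top, so treat this as a way to frame the trade-off rather than a performance model.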

Ultimately, the next leap must happen at the memory-architecture level. Consider this: today’s NVIDIA GPU boom wouldn’t exist without HBM (High Bandwidth Memory). That innovation helped SK hynix surpass Samsung in memory market share for the first time in history.

HBM itself was originally designed for efficient sensor data handling: high-bandwidth parallel processing of 2D data. The concept first came from Sony, followed by Samsung, both leveraging their camera businesses. Yet internal organizational friction caused Samsung to miss its moment, leaving it now racing to catch up with SK hynix.

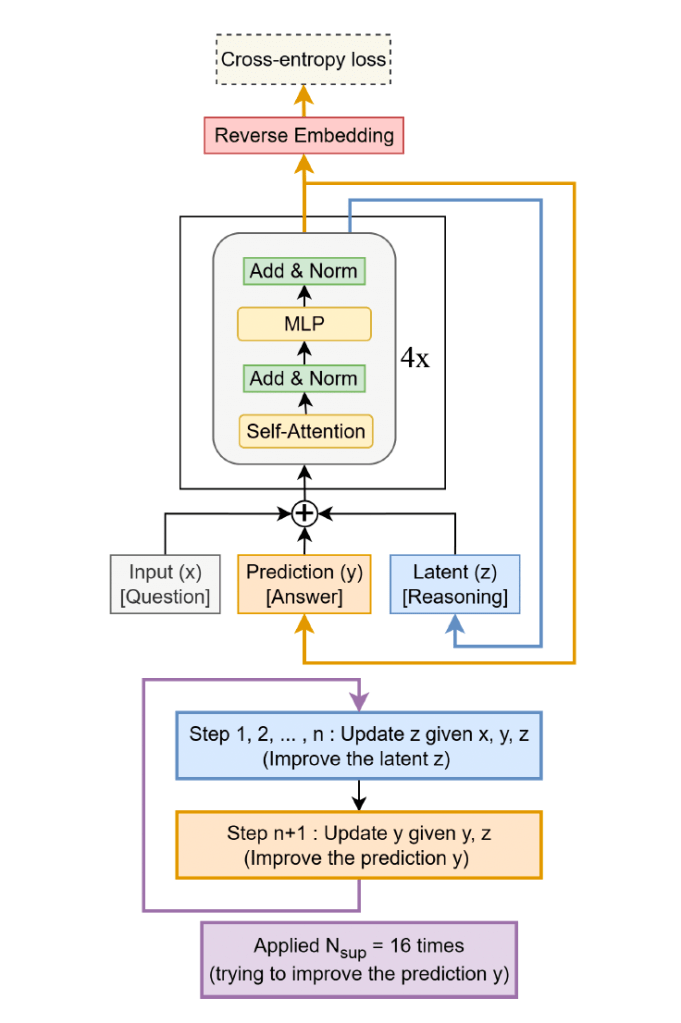

Recently, Samsung drew attention with its paper “Less is More: Recursive Reasoning with Tiny Networks.” As the title suggests, it’s another architecture heavy on memory operations. I found it fascinating precisely because it came from a company that both designs and fabricates memory chips. About five years ago, when I briefly discussed a long-term collaboration with a company starting with ‘H,’ I proposed a direction: embedding more compute into the memory stack than HBM does. If someone like me was already thinking that way then, the real experts surely have been for years.

And just a few days ago, Elon Musk revealed that Tesla’s new AI5 chip removes traditional ISPs and legacy GPUs entirely — a full re-architecture. For Tesla, which must run AI efficiently on cars and humanoid robots, custom hardware is inevitable. It will be fascinating to watch how Tesla deepens its partnership with Samsung, the rare player that spans foundry, non-memory chip design, and memory design/manufacturing all under one roof.

In conclusion, unless major new breakthroughs emerge at the device level, progress for the next phase will center on application-specific optimization — designs tuned to concrete use cases. This direction will unfold alongside the expansion of AI applications themselves. After all, the phrase application-specific optimization only makes sense once a clear ROI can be defined for each application.

Final thoughts: the era of bold-minded startups!

We are living in a time when humanity can create the greatest value with the fewest resources. But because speed and scale now determine survival, even small startups must think and act with a big-company mindset — seeing further, moving faster, and building bigger. Otherwise, they risk fading out.

I’ve been saying this since the very beginning of my startup journey: startups today need to make decisions like corporations, not like the startups of a decade ago. Yet I still see people repeating those old startup manuals and calling it “mentoring.”

Thankfully, from day one, my co-founder and I have been completely aligned on the bigger picture — on where the world is heading and how we should move within it.

Every day, we’re executing on that shared vision.

As for how the market and business-strategy landscape are evolving — I’ll save that for the next episode!

• EON was recently featured by Longevity.Technology, one of the world’s leading voices in the longevity space. The article dives into how EON is building an AI Operating system for Longevity.

• If you want to follow my thoughts on timely topics more often, follow me on X. I share key news and quick takes as they happen — sometimes in English, sometimes in Korean, depending on context. Thankfully, X now auto-translates all posts, so you won’t miss anything.
